Amazon now typically asks interviewees to code in an online document. Now that you know what questions to expect, let's focus on how to prepare.

Below is our four-step preparation plan for Amazon data scientist candidates. Before investing tens of hours preparing for an interview at Amazon, you should take some time to make sure it's actually the right company for you.

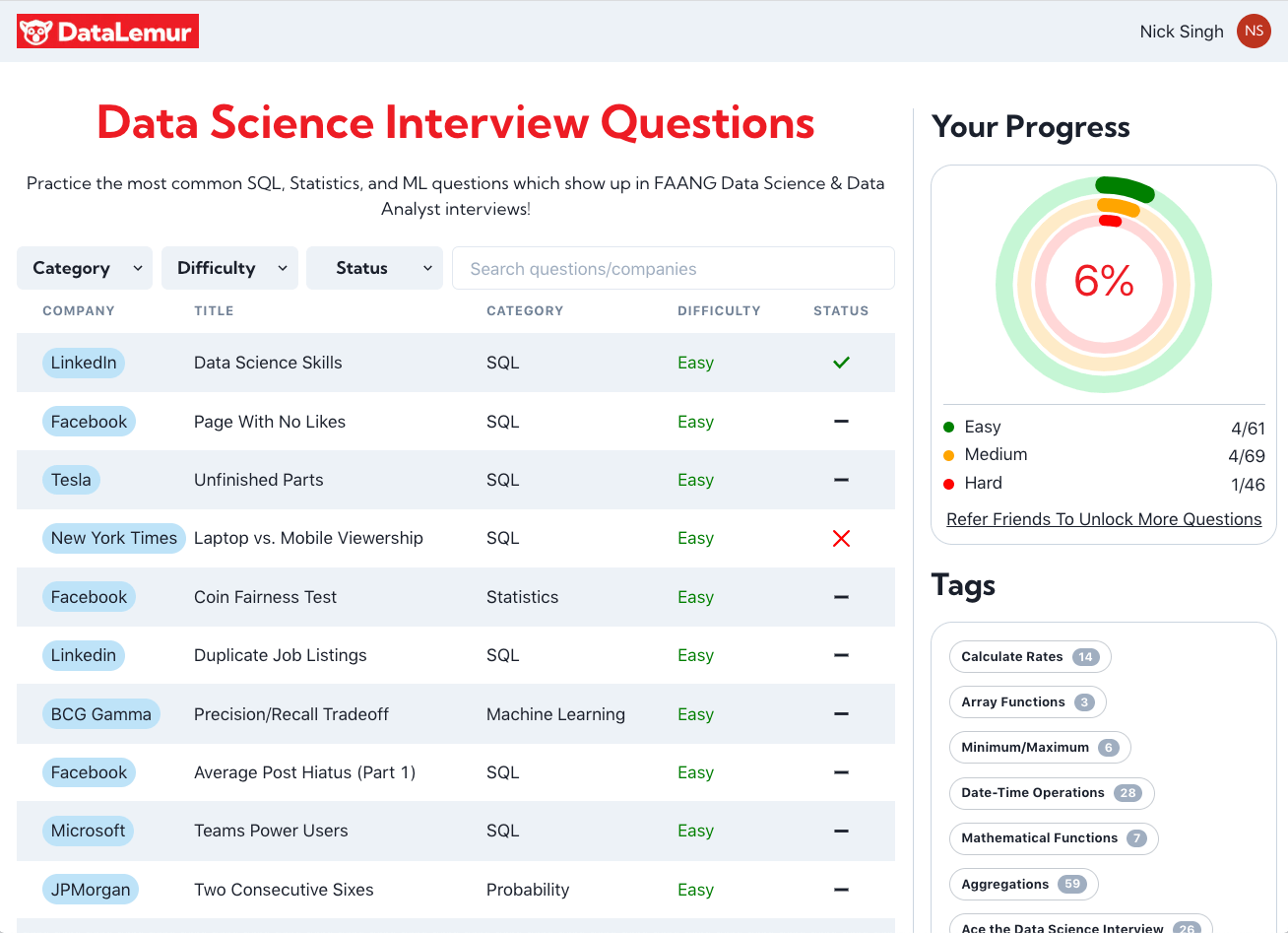

Practice the method using example questions such as those in section 2.1, or those for coding-heavy Amazon positions (e.g. the Amazon software development engineer interview guide). Also, practice SQL and programming questions with medium and hard level examples on LeetCode, HackerRank, or StrataScratch. Take a look at Amazon's technical topics page, which, although it's designed around software development, should give you an idea of what they're looking for.

Keep in mind that in the onsite rounds you'll likely have to code on a whiteboard without being able to execute it, so practice working through problems on paper. Free courses are also available covering introductory and intermediate machine learning, as well as data cleaning, data visualization, SQL, and others.

Coding Practice For Data Science Interviews

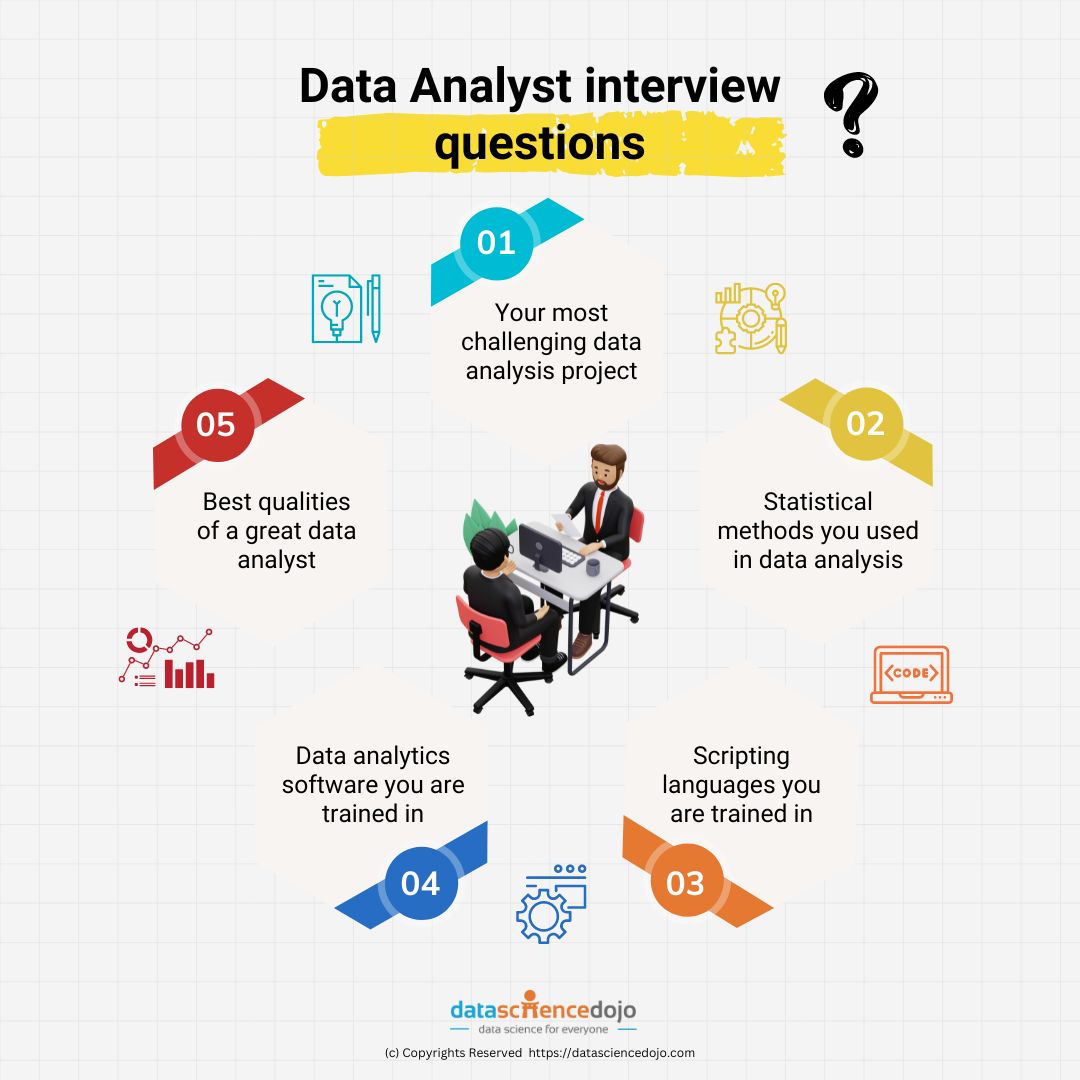

Make sure you have at least one story or example for each of the principles, drawn from a variety of positions and projects. Finally, a great way to practice all of these different types of questions is to interview yourself out loud. This may sound strange, but it will significantly improve the way you communicate your answers during an interview.

One of the main challenges of data scientist interviews at Amazon is communicating your answers in a way that's easy to understand. As a result, we strongly recommend practicing with a peer interviewing you.

Be warned, as you may come up against the following problems: It's hard to know if the feedback you get is accurate. Peers are unlikely to have insider knowledge of interviews at your target company. On peer platforms, people often waste your time by not showing up. For these reasons, many candidates skip peer mock interviews and go straight to mock interviews with an expert.

Preparing For Data Science Interviews

That's an ROI of 100x!

Data science is quite a large and varied field. As a result, it is really hard to be a jack of all trades. Traditionally, data science focuses on mathematics, computer science and domain expertise. While I will briefly cover some computer science fundamentals, the bulk of this blog will mostly cover the mathematical basics one might need to brush up on (or even take an entire course on).

While I understand most of you reading this are more math heavy by nature, realize that the bulk of data science (dare I say 80%+) is collecting, cleaning and processing data into a useful form. Python and R are the most popular languages in the data science space. However, I have also come across C/C++, Java and Scala.

Google Interview Preparation

It is common to see the majority of data scientists fall into one of two camps: Mathematicians and Database Architects. If you are the second one, the blog won't help you much (YOU ARE ALREADY AWESOME!).

Data collection might involve gathering sensor data, parsing websites or carrying out surveys. After collecting the data, it needs to be transformed into a usable form (e.g. key-value store in JSON Lines files). Once the data is collected and put in a usable format, it is essential to perform some data quality checks.
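As a rough illustration of the steps above, the sketch below writes a few records as JSON Lines and runs two simple quality checks (missing values and exact duplicates). The record fields `sensor_id` and `temperature` and the helper names are hypothetical, chosen only for this example.

```python
import json

# Hypothetical sensor readings; the field names are illustrative only.
raw_records = [
    {"sensor_id": "a1", "temperature": 21.5},
    {"sensor_id": "a2", "temperature": None},  # missing value
    {"sensor_id": "a1", "temperature": 21.5},  # exact duplicate of the first row
]


def to_json_lines(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(record, sort_keys=True) for record in records)


def quality_report(jsonl_text):
    """Count rows, rows with a missing value, and exact duplicate rows."""
    seen = set()
    report = {"rows": 0, "missing": 0, "duplicates": 0}
    for line in jsonl_text.splitlines():
        record = json.loads(line)
        report["rows"] += 1
        if any(value is None for value in record.values()):
            report["missing"] += 1
        # Keys are sorted on serialization, so identical records give identical lines.
        if line in seen:
            report["duplicates"] += 1
        seen.add(line)
    return report


jsonl = to_json_lines(raw_records)
report = quality_report(jsonl)
print(report)
```

Real pipelines usually add more checks (value ranges, types, timestamps), but the pattern of reading one record per line and accumulating counts stays the same.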

Amazon Interview Preparation Course

However, in cases of fraud, it is very common to have heavy class imbalance (e.g. only 2% of the dataset is actual fraud). Such information is important for making the appropriate choices in feature engineering, modelling and model evaluation. For more information, check my blog on Fraud Detection Under Extreme Class Imbalance.

In bivariate analysis, each feature is compared to other features in the dataset. Scatter matrices allow us to find hidden patterns such as features that should be engineered together, and features that may need to be eliminated to avoid multicollinearity. Multicollinearity is in fact an issue for multiple models like linear regression and hence needs to be taken care of accordingly.
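To see why the imbalance matters for model evaluation, here is a minimal sketch using made-up labels with 2% fraud. A model that always predicts "not fraud" scores 98% accuracy while catching no fraud at all. The label encoding (1 = fraud) and the helper names are assumptions for illustration.

```python
from collections import Counter

# Hypothetical labels: 1 = fraud, 0 = legitimate (2% fraud).
labels = [1] * 2 + [0] * 98


def class_proportions(y):
    """Share of each class in the label list."""
    counts = Counter(y)
    return {label: count / len(y) for label, count in counts.items()}


def majority_baseline_accuracy(y):
    """Accuracy of a model that always predicts the most frequent class."""
    return max(Counter(y).values()) / len(y)


def recall_for_class(y_true, y_pred, positive=1):
    """Fraction of actual positives that were predicted as positive."""
    true_pos = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    actual_pos = sum(1 for t in y_true if t == positive)
    return true_pos / actual_pos if actual_pos else 0.0


always_legit = [0] * len(labels)
proportions = class_proportions(labels)
baseline_acc = majority_baseline_accuracy(labels)
fraud_recall = recall_for_class(labels, always_legit)
print(proportions, baseline_acc, fraud_recall)
```

This is why metrics such as recall or precision on the minority class are usually reported alongside accuracy for imbalanced problems.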
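A numeric companion to a scatter matrix is a pairwise correlation check. The sketch below flags feature pairs whose absolute Pearson correlation exceeds a threshold. The 0.9 cutoff, the feature names and the toy data are assumptions for illustration, not a fixed rule.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def highly_correlated_pairs(features, threshold=0.9):
    """Return (name_a, name_b, r) for every feature pair with |r| >= threshold."""
    flagged = []
    for (name_a, col_a), (name_b, col_b) in combinations(features.items(), 2):
        r = pearson(col_a, col_b)
        if abs(r) >= threshold:
            flagged.append((name_a, name_b, round(r, 3)))
    return flagged


# Toy data: height is recorded twice in different units, so the two columns are redundant.
features = {
    "height_cm": [150.0, 160.0, 170.0, 180.0, 190.0],
    "height_in": [59.1, 63.0, 66.9, 70.9, 74.8],
    "age": [31.0, 25.0, 47.0, 22.0, 38.0],
}
flagged = highly_correlated_pairs(features)
print(flagged)
```

A flagged pair is a candidate for dropping one column or combining the two, depending on the model.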

Imagine using internet usage data. You will have YouTube users consuming as much as gigabytes of data while Facebook Messenger users use only a couple of megabytes.

Another issue is the use of categorical values. While categorical values are common in the data science world, realize that computers can only understand numbers.
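One common way to handle features on very different scales is to rescale them. The sketch below shows z-score standardization and min-max scaling on made-up usage numbers in megabytes. The values and function names are illustrative assumptions.

```python
from statistics import mean, pstdev

# Hypothetical monthly usage in megabytes: a few light users and two heavy ones.
usage_mb = [2.0, 5.0, 3.0, 4000.0, 8000.0]


def standardize(values):
    """Z-score scaling: subtract the mean and divide by the population std dev."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def min_max_scale(values):
    """Rescale values linearly into the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


z_scores = standardize(usage_mb)
scaled = min_max_scale(usage_mb)
print(z_scores)
print(scaled)
```

Which scaling to use depends on the model and on how heavy the tails are. For strongly skewed data like this, a log transform before scaling is another option worth considering.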
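One widely used way to turn categories into numbers is one-hot encoding, where each category becomes its own 0/1 column. The sketch below is a minimal pure-Python version. The category values are made up for illustration.

```python
def one_hot_encode(values):
    """Return the sorted category list and one 0/1 row per input value."""
    categories = sorted(set(values))
    index = {category: i for i, category in enumerate(categories)}
    rows = []
    for value in values:
        row = [0] * len(categories)
        row[index[value]] = 1
        rows.append(row)
    return categories, rows


# Hypothetical categorical feature: which app a session belongs to.
apps = ["youtube", "messenger", "youtube", "maps"]
categories, encoded = one_hot_encode(apps)
print(categories)
for row in encoded:
    print(row)
```

Note that a feature with many distinct categories produces many mostly-zero columns, which is one way sparse, high-dimensional data arises.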

Effective Preparation Strategies For Data Science Interviews

At times, having too many sparse dimensions will hamper the performance of the model. An algorithm commonly used for dimensionality reduction is Principal Components Analysis or PCA.

The common categories and their subgroups are explained in this section. Filter methods are generally used as a preprocessing step. The selection of features is independent of any machine learning algorithm. Instead, features are selected on the basis of their scores in various statistical tests for their correlation with the outcome variable.

Common methods under this category are Pearson's Correlation, Linear Discriminant Analysis, ANOVA and Chi-Square. In wrapper methods, we try to use a subset of features and train a model using them. Based on the inferences that we draw from the previous model, we decide to add or remove features from the subset.
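To make the idea concrete, here is a small sketch of PCA's first principal component: center the data, build the covariance matrix, and find its dominant eigenvector. Power iteration is used here only to keep the example dependency-free; in practice a library routine (an eigendecomposition or SVD) would normally be used. The toy data and function names are assumptions.

```python
from math import sqrt


def center(rows):
    """Subtract the column mean from every column."""
    n, d = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in rows]


def covariance_matrix(centered):
    """Sample covariance matrix of already-centered rows."""
    n, d = len(centered), len(centered[0])
    return [
        [sum(row[i] * row[j] for row in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]


def first_principal_component(cov, iterations=200):
    """Approximate the dominant eigenvector of cov by power iteration."""
    d = len(cov)
    v = [1.0 / sqrt(d)] * d  # assumed not orthogonal to the dominant eigenvector
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sqrt(sum(x * x for x in w))
        if norm == 0:
            break
        v = [x / norm for x in w]
    return v


def project(centered, component):
    """Project each centered row onto the component (one score per row)."""
    return [sum(a * b for a, b in zip(row, component)) for row in centered]


# Toy 2-D data lying close to the line y = 2x.
rows = [[1.0, 2.0], [2.0, 4.1], [3.0, 5.9], [4.0, 8.0], [5.0, 10.1]]
centered = center(rows)
component = first_principal_component(covariance_matrix(centered))
scores = project(centered, component)
print(component)
print(scores)
```

Here two correlated columns are reduced to a single score per row, and the component points roughly along the direction (1, 2), where the data varies most.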
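A filter method based on Pearson's correlation can be sketched as follows: score every feature by its absolute correlation with the outcome, then keep the top k, without training any model. The feature names, toy data and the choice of k are illustrative assumptions.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either input has zero variance."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / sqrt(var_x * var_y)


def rank_features(features, target):
    """Sort features by absolute correlation with the target, strongest first."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def select_top_k(features, target, k):
    """Keep the names of the k highest-scoring features."""
    return [name for name, _ in rank_features(features, target)[:k]]


# Toy data: two informative features and one that is mostly noise.
features = {
    "size_sqm": [50.0, 60.0, 80.0, 100.0, 120.0],
    "noise": [3.0, 1.0, 4.0, 1.0, 5.0],
    "rooms": [2.0, 2.0, 3.0, 4.0, 4.0],
}
price = [150.0, 180.0, 240.0, 310.0, 350.0]
selected = select_top_k(features, price, k=2)
print(selected)
```

A wrapper method would instead retrain a model for each candidate subset, which is more expensive but accounts for interactions between features.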

Google Data Science Interview Insights

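Common methods under this category are Forward Selection, Backward Elimination and Recursive Feature Elimination. Among regularization-based methods, LASSO and RIDGE are common ones. The regularized objectives are given below for reference:

Lasso: $\min_{\beta} \sum_{i=1}^{n} (y_i - x_i^\top \beta)^2 + \lambda \sum_{j=1}^{p} |\beta_j|$

Ridge: $\min_{\beta} \sum_{i=1}^{n} (y_i - x_i^\top \beta)^2 + \lambda \sum_{j=1}^{p} \beta_j^2$

That being said, it is important to understand the mechanics behind LASSO and RIDGE for interviews.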
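The mechanical difference between the two penalties is easiest to see with a single feature and no intercept, where both problems have closed-form solutions. In the sketch below, ridge minimizes sum((y - b*x)^2) + lam * b^2 and lasso minimizes sum((y - b*x)^2) + lam * |b|. The function names and toy data are assumptions, and the inputs are assumed to contain at least one nonzero x.

```python
def ridge_coefficient(xs, ys, lam):
    """Closed form for one feature: b = sum(x*y) / (sum(x^2) + lam)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold, clamping to exactly zero inside it."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def lasso_coefficient(xs, ys, lam):
    """Closed form for one feature: b = soft_threshold(sum(x*y), lam / 2) / sum(x^2)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return soft_threshold(sxy, lam / 2) / sxx


# Toy data generated from y = 0.5 * x, so the unpenalized coefficient is 0.5.
xs = [1.0, 2.0, 3.0]
ys = [0.5, 1.0, 1.5]

for lam in (0.0, 4.0, 14.0):
    print(lam, ridge_coefficient(xs, ys, lam), lasso_coefficient(xs, ys, lam))
```

As the penalty grows, ridge keeps shrinking the coefficient but never reaches zero, while lasso sets it to exactly zero once the penalty is large enough. That is why lasso can act as a feature selector.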

Unsupervised learning is when the labels are unavailable. Getting this wrong is enough for the interviewer to cut the interview short. Another rookie mistake people make is not normalizing the features before running the model.

Hence, a rule of thumb: Linear and Logistic Regression are the most basic and commonly used machine learning algorithms out there. Before doing any analysis, start with one of them as a baseline. One common interview mistake people make is starting their analysis with a more complex model like a neural network. No doubt, neural networks can be highly accurate. However, baselines are important.
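A baseline can be as small as a one-feature least-squares fit. The sketch below fits simple linear regression in closed form and reports its mean squared error, which is the number any more complex model would then need to beat. The toy data is made up, and the error is computed on the training data only to keep the example short; a real comparison would use held-out data.

```python
def fit_simple_linear_regression(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mean_squared_error(y_true, y_pred):
    """Average squared difference between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy data that is roughly linear.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.0]

slope, intercept = fit_simple_linear_regression(xs, ys)
predictions = [slope * x + intercept for x in xs]
baseline_mse = mean_squared_error(ys, predictions)
print(slope, intercept, baseline_mse)
```

If a neural network cannot clearly improve on this number, the extra complexity is hard to justify.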
